If you’ve ever watched people argue about whether the tool matters or whether it’s all about how you use it, congratulations—you’ve basically attended a family dinner, a group project meeting, and an instructional technology conference… at the same time.

Welcome to the Clark–Kozma debate, the academic version of:

- “It’s not the car, it’s the driver.”

- “Yeah, but the car is a Tesla and it literally drives itself.”

- “Still. It’s not the car.”

- “Okay but—look at the car.”

Now sprinkle AI into this debate, and suddenly everybody’s yelling again, but with nicer fonts and a lot more “paradigms.”

Let’s unpack the argument properly, comprehensively, and with the level of humor appropriate for people who have read too many PDFs.

The Debate in One Meme

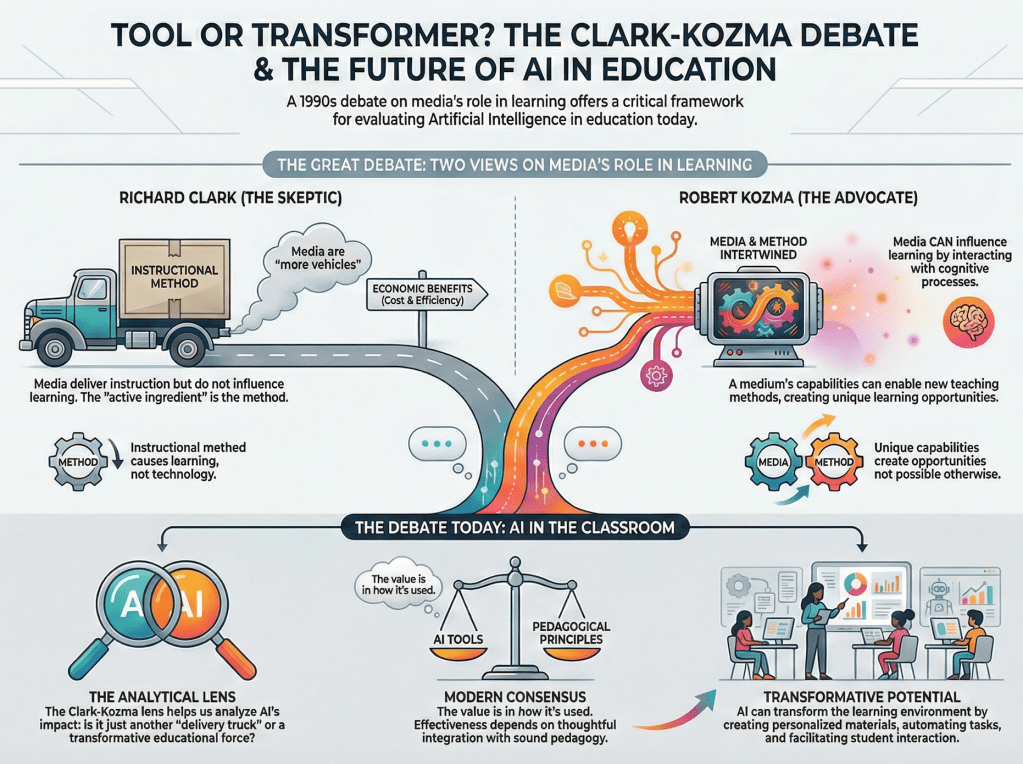

Clark (Team “It’s the pedagogy, bestie”)

Media/technology is just a vehicle that delivers instruction. It doesn’t cause learning. If learning improves, it’s because the method improved—not because you swapped chalk for a touchscreen.

Clark’s vibe:

“If you learned better from a video than a textbook, it’s because the video used better instructional strategies, not because it was a video.”

Kozma (Team “The medium is doing something here!”)

Media/technology can influence learning because different media provide different capabilities (like simulation, visualization, interaction) that shape how learners think, process, and construct knowledge.

Kozma’s vibe:

“You’re not just ‘delivering’ instruction. You’re changing the learning environment—and that can change cognition.”

So Clark says: media don’t influence learning.

Kozma says: media might, under the right conditions.

And then the field spent decades saying:

“Okay… both of you are annoying, but also both of you are right.”

Why This Debate Refuses to Die (Even in 2026)

Because every few years, a shiny new technology shows up like:

- Film 📽️

- Radio 📻

- Television 📺

- Computers 💻

- Online learning 🌐

- VR 🥽

- And now: AI 🤖

And the world says:

“This will revolutionize education.”

Then reality says:

“lol.”

The pattern is so consistent it deserves its own instructional design principle:

The Hype Cycle Principle

- New tech appears

- People declare the end of teachers

- Institutions buy the tech

- Learning outcomes don’t magically improve

- Everyone blames teachers

- Repeat with a newer tech

This is exactly the kind of tech-optimism pattern that the editorial on AI and the Clark–Kozma debate highlights: AI is being welcomed with a familiar mix of enthusiasm and skepticism.

Enter AI: The New Kid That Thinks It’s the Teacher

AI isn’t just PowerPoint with confidence.

In online graduate education especially, AI is being framed in two ways:

Version 1: AI as Clark’s “Vehicle”

AI is a tool. It can deliver content, feedback, activities—but it won’t improve learning unless the pedagogy is solid.

Translation:

“AI can’t save your course if your course is trash.”

This view argues that if AI generates poorly designed instruction, learning will still be weak, just faster and with better grammar.

Version 2: AI as Kozma’s “Transformative Partner”

AI changes the learning environment by enabling things that are hard to do otherwise:

- Personalized materials instantly

- Adaptive pacing

- Tailored feedback

- Scalable tutoring-like support

- AI-facilitated discussion tools

In this view, AI isn’t just delivering learning; it’s reshaping the student–faculty relationship into a triad: student + instructor + AI (the “third-party facilitator”).

And that’s where it gets spicy:

If AI becomes an “equal partner,” what happens to human teaching?

The Online Graduate Education Twist: AI Might Become the Main “Interface”

The editorial raises a very real concern: in online graduate education, because students and instructors are separated by distance and time, AI could become the primary delivery mechanism for content, feedback, and interaction.

That sounds efficient. Also terrifying.

Because graduate education isn’t just:

- content absorption

- quiz completion

- discussion-post manufacturing

Graduate education is also:

- intellectual mentorship

- relational trust

- nuanced feedback

- epistemic growth

- identity formation as a scholar/practitioner

And AI can facilitate… but it can’t fully replicate the “human aspects” of teaching, especially the compassionate, relational side that matters at the graduate level.

So the fear isn’t “AI will destroy learning.”

The fear is more subtle:

AI might optimize graduate education into something that no longer feels like graduate education.

Like turning a seminar into a customer service chat.

So Who Wins: Clark or Kozma?

The editorial basically says:

Neither gets to do a victory lap.

It suggests the field has mostly resolved this debate by conceding something like:

Educational technology success depends on a balance between the tool’s capabilities and the quality of implementation.

Which is academia’s way of saying:

“Both sides, please stop yelling. We have a grant deadline.”

But the editorial insists the question stays critical with AI because AI is not just one more “medium.” It’s increasingly embedded in how courses are designed, how feedback is given, and how learners engage.

My Favorite Way to Explain the Debate: The “Chef vs. Kitchen” Analogy

Clark:

“It’s not the kitchen. It’s the chef.”

Give a great chef a stove or a campfire—still great food.

Kozma:

“But the kitchen shapes what you can cook.”

A microwave changes your culinary universe. A sous-vide machine changes it again. A molecular gastronomy lab? Now you’re making edible foam and questioning reality.

AI is the kitchen that also offers suggestions, auto-preps ingredients, and occasionally insists it invented salt.

Clark says: the chef matters.

Kozma says: the kitchen matters.

Reality says: you need both, and also a health inspector.

What This Means for Instructional Design (Yes, We’re Going There)

If you’re designing learning with AI and you only take Clark seriously, you might do this:

✅ “Let’s focus on pedagogy first. AI is optional.”

✅ “Use AI only where it supports existing instructional goals.”

✅ “AI is only as good as the learning design behind it.”

If you only take Kozma seriously, you might do this:

✅ “Let’s redesign the learning environment around what AI makes possible.”

✅ “Let’s use AI to personalize learning pathways.”

✅ “Let’s treat AI as an active element in the learning system.”

But the editorial’s practical conclusion is more grounded:

AI’s value is not in the technology itself but in how it is used.

That’s not a boring conclusion. It’s a dangerous one. Because it means the burden is on designers and instructors to get it right—ethically, pedagogically, and relationally.

The Real Plot Twist: AI Makes “Bad Pedagogy” Faster

The great promise of AI is personalization, feedback, and scale.

The great risk is… personalization, feedback, and scale.

Because now:

- a poorly designed course can be automated at maximum efficiency

- generic thinking can be produced with extraordinary fluency

- discussion can become performance rather than meaning-making

- learning can shift from construction to completion

AI can absolutely help students learn.

But it can also help students produce artifacts that look like learning from 50 feet away.

Graduate education especially cannot survive as a simulation of thinking.

(And yes, I hear the irony. AI wrote that line.)

A “Human-Centered” Ending (Without Getting Preachy)

The editorial warns that AI-driven environments can become overly standardized and mechanized, and that AI may not replicate the nuanced, human relationships central to graduate learning.

So the best takeaway isn’t “AI is good” or “AI is bad.”

It’s this:

AI should be designed to enhance learning without diminishing the human parts of education that matter most.

In other words, don’t let AI become the main character.

Let it be a powerful supporting actor.

And like any supporting actor, it should:

- follow the script (learning outcomes)

- respect the director (instructional design)

- and not improvise a plot twist that breaks the course.

The One-Liner Summary

Clark: “AI is just a vehicle.”

Kozma: “AI can reshape learning.”

The editorial: “AI can do either—depending on design—and online graduate education must protect the human core while using AI thoughtfully.”
